Every firm believes its knowledge problem is a storage problem. It isn't. The constraint is upstream - in the habits, the accountability structures, and the discipline that produce structured knowledge in the first place. AI shifts the bottleneck. It doesn't remove it.

Y Combinator's Summer 2026 Request for Startups includes two ideas I recognised immediately: the Company Brain and the AI Operating System for Companies. I recognised them because I have spent the better part of 25 years building versions of both for architecture and engineering firms. YC frames them as frontier problems waiting to be solved. From where I'm standing, they are decades-old problems with a long history of partial solutions and quiet failures. YC is right that every company needs one. They are wrong about what has been stopping companies from building it.

It's the late stage of a design review, and the glazing drawings need to be signed off before they are issued to building control. There's a question about a particular detail that no-one can answer. The detail was copied from a previous project that used the same system, but the rationale behind it sits in a calculation sheet from 2018, and the engineer who wrote it has left the firm. The manufacturer's catalogue has been updated recently, but apart from the Z-plate getting slightly thicker, it all looks the same. The firm has 200 people and a knowledge base. The knowledge base doesn't help.

Company knowledge is tacit by default. It only becomes explicit when someone bothers to write it down, and that usually happens once, hurriedly, and is rarely maintained. This is tacit debt - the accumulated gap between what a firm knows and what it has made legible. Like technical debt, it compounds silently. Unlike technical debt, most firms don't know they're carrying it until they try to find something and discover the knowledge isn't there, is out of date, or is only partially explained.

Over the years, I have built a series of tools to capture company knowledge and make it queryable, including a knowledge base for a firm of consulting engineers. After the first year, we added expiration dates to information pages. Not because the technology required it, but because without a forcing mechanism, wrong answers quietly outlasted correct ones. The firm had a brain. Often, the brain was lying to them. A knowledge base without governance is not a brain; it's sediment. The hardest part has always been getting busy professionals to contribute and tag things consistently, and finding someone willing to own the accuracy of it all.

Another development of mine, Sling, is a workflow/BPM tool that an architecture firm uses to manage its business development process. Making the process legible required the firm to first agree on what the workflow actually was - what information needed to be gathered, who needed to review it, and at which stage. That negotiation took months and was political, not technical.

In both cases, technology was the easiest part. The hard part was always elsewhere - in the humans, the habits, the accountability structures that produce knowledge in the first place.

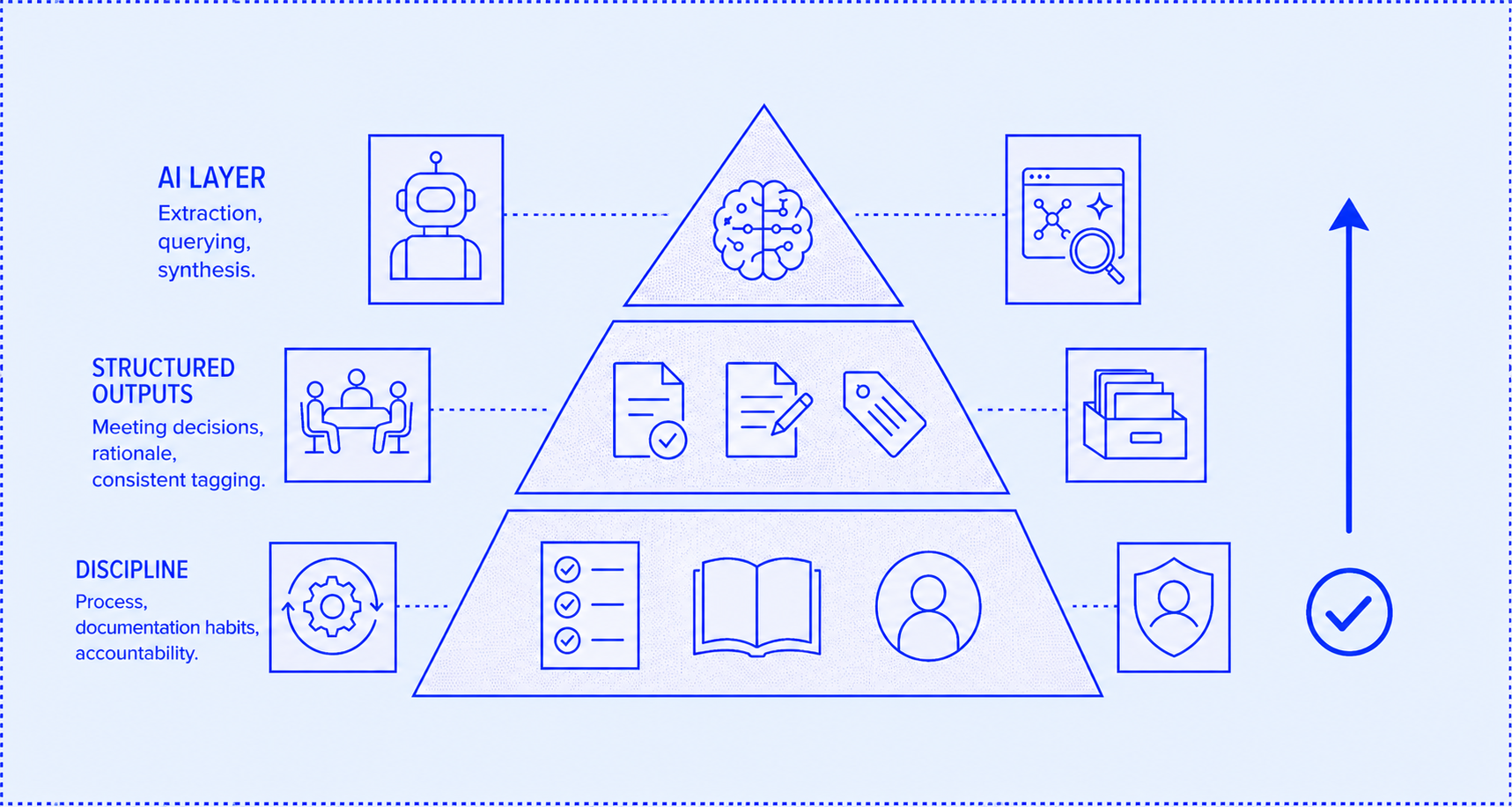

After 25 years of this work, the pattern is consistent. Every firm believes its knowledge problem is a storage problem. Build a better repository, they think, and the knowledge will flow into it. It doesn't. The real constraint is upstream: meetings produce transcripts but not decisions, drawings don't carry the rejected alternatives, processes exist in people's heads rather than on paper. The closed loop YC describes requires a firm that is already producing structured outputs. Most are not. That is not a technology gap. It is a discipline gap.

Credit where it's due. LLMs will genuinely change the economics of knowledge capture. You can now extract structured information from messy sources - meeting notes, emails, drawings, calculations - faster and more cheaply than before. That matters, but it shifts the bottleneck rather than removing it. The new bottleneck is the verification gap: who checks whether what the AI extracted is actually what the company believes? Who maintains the ground truth? The company brain is valuable only when it's trusted, trusted only when it's accurate, and accurate only when someone is accountable for it.

That's an organisational design problem, not a product problem. The firms that will get the most from AI automation are not the ones that buy the best tools. They're the ones that have spent years insisting on process, documentation, and accountability - firms that were already, in some sense, building a company brain before YC called it that. The AI layer doesn't create that discipline; it rewards the firms that already have it.

Tacit debt doesn't clear itself when you plug in a new tool. The company brain comes second. The discipline to produce, maintain and be accountable for structured knowledge comes first - and no Request for Startups is going to fund that.

If you would like to find out more about working effectively with AI, please do get in touch.