Coding agents and LLMs have made the build faster. But a faster build hasn't compressed project timelines, because the build was only the first bottleneck. Goldratt's Theory of Constraints explains what is actually happening - and where to look for the real delay.

Recently, a client requested a small change to an existing application - a new dashboard view. Previously I wouldn't have produced a wireframe for a change this size - the build cost wouldn't justify it. But with Claude Code I could take their description, quickly sketch something out, and produce a clickable wireframe in an afternoon. It's been sitting with the client since.

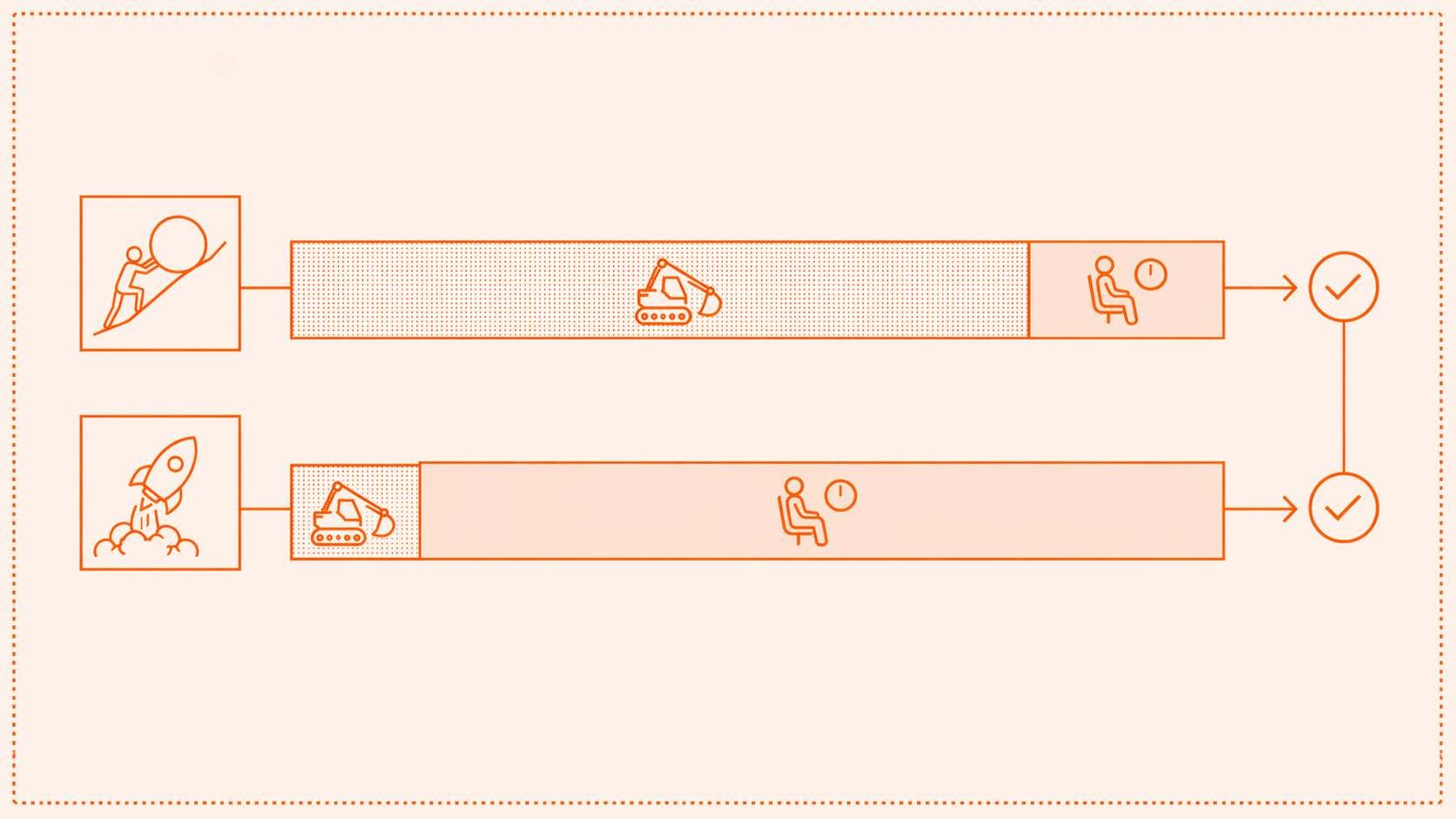

This is what constraint migration looks like - a task that used to be constrained by my own effort is now dependent on someone else's availability. Production has sped up significantly in the last 12 months - coding agents make application code quicker to develop, LLMs make documents quicker to write and feedback easier to incorporate. But project timelines haven't moved. If the build has sped up, something else is eating the time we've gained.

The natural assumption is that faster build means faster delivery. In a recent edition of his "The Batch" newsletter, Andrew Ng observed that "some [development] teams are pushing engineer:product manager (PM) ratios downward... to have engineers who know how to do some product work." For a solo consultant this isn't news - we've been doing this all along. But Ng's framing points at something that Eliyahu M Goldratt's Theory of Constraints named in 1984 - any system has a binding constraint at any given time, and removing it just reveals the next bottleneck. When AI accelerates the build, work arrives at the next step faster than before. If that step involves detailed human cognition - a stakeholder who needs to fully understand something before signing it off, a senior developer who needs to take responsibility for a feature before putting it live - the speed of the previous step doesn't matter. It just makes the queue at the next gateway more visible.
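A toy model makes the arithmetic concrete. The sketch below treats a project as a two-stage pipeline and takes throughput to be the capacity of the slowest stage. The stage names and the weekly capacities are invented for illustration, not measured from any real project.

```python
# Hypothetical weekly capacities (items per week); the figures are assumptions.
def throughput(capacity_per_week):
    """A serial pipeline finishes work no faster than its slowest stage."""
    return min(capacity_per_week.values())


def constraint(capacity_per_week):
    """Name of the stage with the lowest capacity, i.e. the binding constraint."""
    return min(capacity_per_week, key=capacity_per_week.get)


before = {"build": 2, "client_approval": 3}
after = {"build": 10, "client_approval": 3}  # build is 5x faster, approval unchanged

for label, stages in (("before", before), ("after", after)):
    print(label, constraint(stages), throughput(stages))

# Work that is built but not yet approved piles up at this weekly rate.
queue_growth = after["build"] - after["client_approval"]
print("queue growth per week:", queue_growth)
```

With these made-up figures, a fivefold build speedup lifts throughput from 2 to 3 items a week, and the constraint moves from build to client approval. The other 7 items built each week simply join the approval queue.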

Client approval has always been a potential constraint in my work. Sometimes, proposals will roll across a series of meeting agendas before being approved or turned down. The stuff I build for clients is rarely at the top of their job descriptions, so is often bumped down priority lists during busy times. But when delivery was slower this felt like normal project friction - it was absorbed into an overall timeline that everyone had already agreed was long. This isn't new. It's just the next bottleneck.

The honest response is that this doesn't feel like it should be my problem. I've done my bit. The delay is on their side. But the approval step sits inside my delivery system whether or not it's under my control. Something unreviewed for a fortnight is two weeks of everyone's time - the bottleneck doesn't care whose calendar it's on. Not all friction is addressable from one side, but that doesn't stop it being friction.

There are standard mitigations. Build approval checkpoints into the project structure. Establish expected response times at contract stage. Both are available in principle, but retrofitting them to existing client relationships is awkward. And I do get it. This stuff often isn't top priority for them. But the asymmetry is real - if they're slow, I wait; if I'm slow, they notice.

There's an obvious counter here, which is that freed capacity might get spent on better work - more discovery, more iteration, higher-quality proposals going into the approval queue. That's possible. But it depends on the discipline of not starting the next thing, and the reality of solo practice is that you tend to earn more by having more clients.

There's no doubt that AI gives small teams and solo consultants like me a genuine increase in capacity. But capacity is not the same as throughput - capacity is how much I can produce, throughput is how much actually gets finished and signed off. And if freed build capacity gets spent starting new work while approvals on current work are pending, delivery timelines on that current work don't get any shorter.

These tools are sold on the premise that faster delivery compresses project timelines. For many of us that won't happen, not because the tools don't work, but because the build was only the first bottleneck. So before investing in the next cutting-edge agent harness, the question worth asking is: where does a typical project actually slow down?

The answer may be in your sent folder, in an email that started "just following up."

If you would like to find out more about working effectively with AI, please do get in touch.