Successful AI-assisted work depends on a genuine division of labour between human judgment and machine capability. What's actually emerging is something different - humans absorbing liability for machine decisions, at scale, while the companies selling the tools express concern that you aren't using them enough.

Jensen Huang, CEO of Nvidia (an AI vendor), would like you to know that he will be deeply alarmed if his $500,000 engineers are not spending an additional $250,000 on AI tokens. This is not a threat, exactly. It is more of a performance review target, delivered in a leather jacket. The host of the All-In podcast had framed it as a question about being an effective employee. Clearly, an effective employee should be wolfing down those tokens - but to what end?

The academic theory goes something like this - a "Centaur" is a person and machine working together with a strategic division of labour, allocating responsibilities based on the strengths of each entity.

The evidence that this works is real enough - people who are screened for breast cancer by AI-supported radiologists are less likely to develop aggressive cancers before the next round of screening than those who are screened by radiologists alone.

Nobody serious disputes that AI assistance, done well, improves outcomes.

But the Centaur aspect is important - "Women that participate in screening say they do not want to have AI as a standalone tool, they want to have a human in the loop."

Here is what the centaur model looks like from the ground. On March 5, 2026, Amazon lost 6.3 million orders when an AI-authored config change caused a six-hour outage. This was the latest in a series of AI errors across the business - in December 2025, its Kiro coding tool decided to "delete and recreate" an entire AWS Cost Explorer environment. In response, Amazon is mandating two-person reviews for all code changes and Director/VP-level audits of all production code changes. At Vercel, passing CI (Continuous Integration) is no longer a guarantee of safety. Vercel's internal guidance boils down to a checklist engineers run before shipping any agent-generated code: What does this do once rolled out? How can it hurt production or customers? And would you own an incident tied to it? The third question is doing a lot of work.

This is where the division of labour breaks down. The centaur model requires each party to be doing what they are best suited to. But what makes a human better at reviewing code they didn't write, cannot fully understand, and are now formally responsible for? Cognitive debt is real - you are not writing the code, there's far too much of it for you to fully understand, but you are responsible if it does bad stuff. What do we call this human in the loop? For Cory Doctorow, a "Reverse Centaur", a "squishy meat appendage for an uncaring machine". For Dan Davies, an "accountability sink". Your job is no longer to do the work. Your job is to take the blame if the machine screws it up.

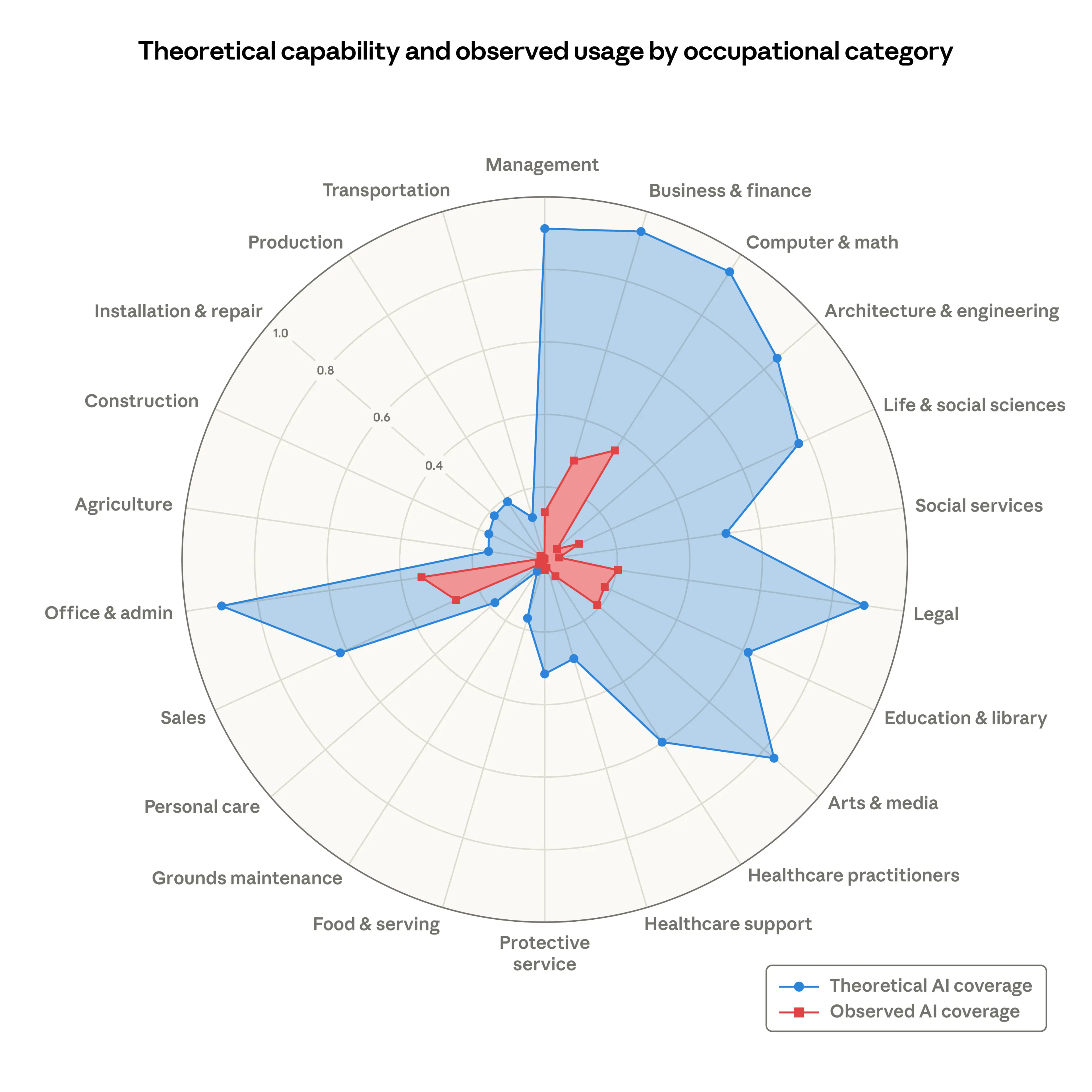

And if you'd like to know the scale of what is coming, a recent article from Anthropic (another AI vendor) assessed the "AI displacement risk" across different occupational categories. Computing came top, but future theoretical AI coverage will affect Management, Business and Finance, Architecture and Engineering, Legal, Office and Admin - anyone who earns their living in front of a computer. Does all this increase in token consumption mean we are selling more stuff? The Jevons paradox suggests that cheaper production increases consumption - but do we really need more software? Or the same amount of software with fewer humans involved? What about accountancy? Investment advice? Lawyering? Do we really need more lawyering?

And what if the thing you produce manifests in the physical world? Already, there is evidence that many commercial building designs are never built - would AI agents producing even more designs lead to more building? How does that fit with real-world material supplies, global supply chains, or environmental targets? AI increases design output, but reality constrains the execution. The optimistic reading is abundance. The pessimistic reading is that we are automating the production of things nobody needed in the first place, at significant environmental cost, against a backdrop of supply chains that a brief glance at current events will confirm are not improving.

No wonder Jensen Huang is so alarmed. I think we're all alarmed. We all want to be effective employees. But what Anthropic's labour research and Nvidia's token-budget alarm share is that they both benefit enormously from you spending money on AI, and they are both very concerned that you are not spending enough. The Centaur is a compelling model when it works. The Reverse Centaur is what you get when the horse gets the credit and you get the incident report.

If you would like to find out more about the implementation of AI, please do not hesitate to get in touch.