This article explores how turning structured knowledge into reusable “skills” lowers the barrier to creating useful tools, shifting the focus from coding to clear thinking — and opening up a new medium for professionals to distribute what they know.

Emil Kowalski is a designer who writes about animations. Spring curves, easing functions, the micro-decisions that make interfaces feel alive. His writing is excellent. But recently he published something that stopped me mid-scroll.

Not a code library. Not a design system. A small text file, written in English, that lets anyone using Claude have their animations reviewed against Emil's own standards. His years of craft, packaged as something you can plug directly into an AI agent and run.

Emil's skill doesn't teach you about animations; it bypasses the entire transfer problem. You plug it in, and your AI agent has the knowledge. Not a summary of it, but the actual decision-making framework.

What's actually happening here is a change in how expertise travels. Think about the lifecycle of professional knowledge. Someone learns something through years of practice. They write a blog post, give a talk or publish a book. You read it and you try to apply it. You may get some of it right, but you miss the edge cases and you forget the nuances within a week.

The bottleneck was never the knowledge itself; it was the transfer. Reading about how to assess an investment fund is not the same as assessing one, in the same way that reading about how an experienced engineer categorises facade defects is not the same as doing it yourself on site.

Skills dramatically reduce the transfer problem. The knowledge goes from the expert's head into a structured text file, and from that file directly into an agent that can execute it on your behalf.

I read an article in the Hargreaves Lansdown magazine about how their analysts evaluate funds for the Wealth Shortlist. It described their methodology step by step: peer group context, style drift analysis, performance quality, income sustainability, cost assessment. All solid, structured, procedural knowledge. The kind of thing you bookmark, tell yourself you'll come back to, and never open again.

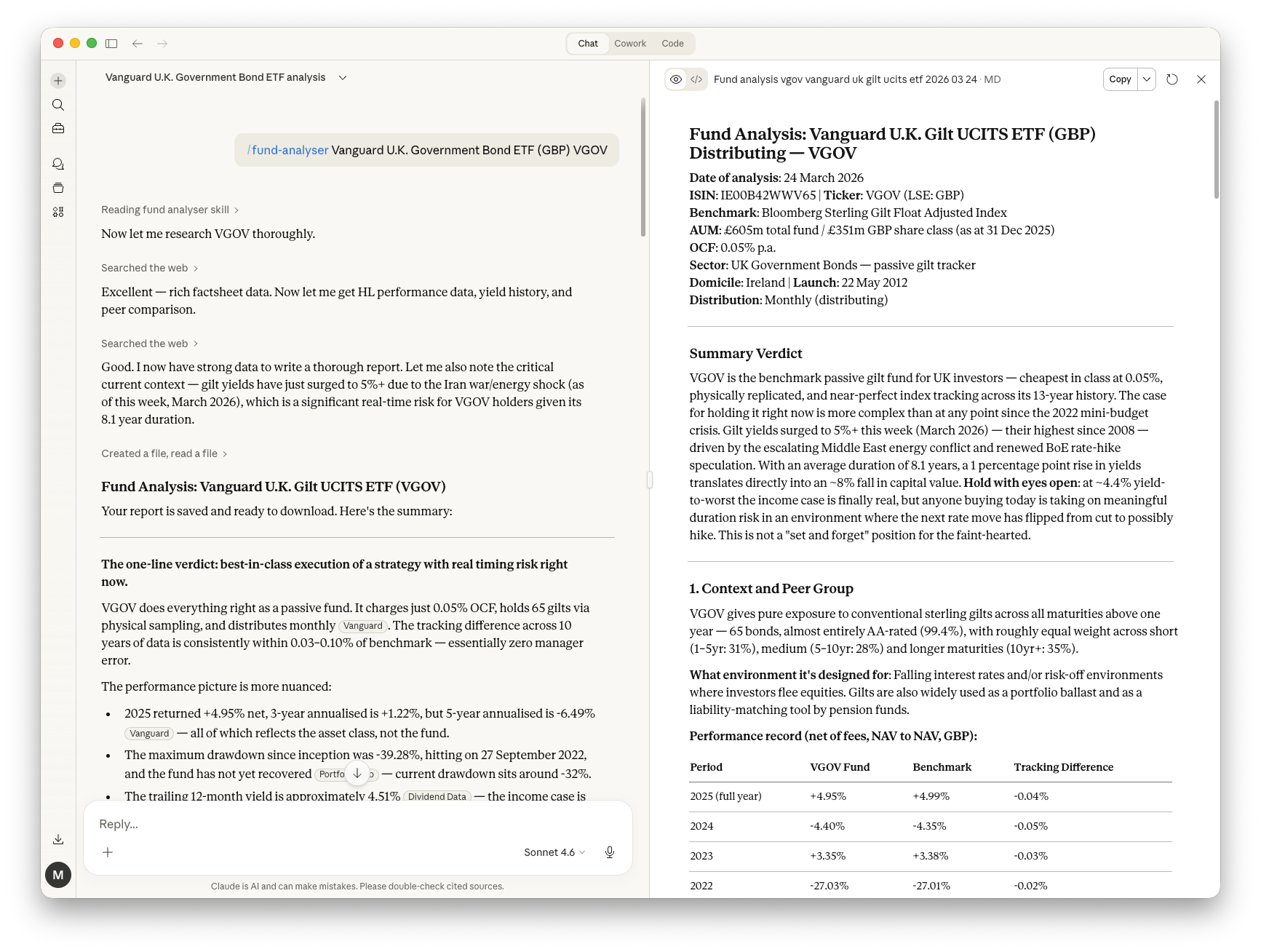

Instead, I fed the article text to Claude's Skill Creator with a simple prompt:

create a skill that follows this methodology to analyse any fund I name.

Update the skill to compare the fund's performance against popular passive index trackers, and include the fees.

/fund-analyser {fund name}

A fifteen-minute magazine read becomes a reusable analytical tool. No code written and no programming knowledge required.

Skills are written in English, not code, although they can include code and all sorts of other files as examples or deeper context. Before this, packaging expertise into something easily reusable by others meant building software: a Python script, a web tool or an API. That limited knowledge-packaging to people who could produce code.
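To make "structured text file" a little more concrete, here is a minimal sketch in Python that assembles one from the fund methodology steps mentioned earlier. Everything in it is an assumption for illustration: the `Skill` class, its field names, and the layout (a short `name` and `description` header followed by numbered steps) are hypothetical, and this is not the file the Skill Creator actually produced. The skill itself stays plain English; the code only stitches the text together.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """Hypothetical container for a skill; the field names are illustrative."""

    name: str
    description: str
    steps: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Return the skill as plain text: a short header, then numbered steps."""
        # Assumed layout: a front-matter style header with a name and description.
        header = f"---\nname: {self.name}\ndescription: {self.description}\n---\n"
        body = "\n".join(
            f"{number}. {step}" for number, step in enumerate(self.steps, start=1)
        )
        return f"{header}\n## Steps\n\n{body}\n"


# Steps paraphrased from the methodology described in the article,
# plus the follow-up request about passive trackers and fees.
fund_skill = Skill(
    name="fund-analyser",
    description="Analyse a named fund step by step and compare it with passive index trackers.",
    steps=[
        "Place the fund in its peer group context.",
        "Check for style drift.",
        "Assess performance quality.",
        "Assess income sustainability.",
        "Assess costs, including fees.",
        "Compare performance against popular passive index trackers.",
    ],
)

skill_text = fund_skill.render()
print(skill_text)
```

The point of the sketch is how little structure is involved: a name, a one-line description, and an ordered list of decisions written the way you would explain them to a colleague.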

With skills, clarity of thinking becomes the bottleneck. Investment analysts, structural engineers, architects, teachers, animators — anyone who can clearly articulate a repeatable decision process can now create a tool that others can plug in and use immediately.

The mistake many people make is thinking this is an AI feature. It's closer to a new medium for knowledge distribution. Blog posts let you share what you know; skills let you share the means to act on it.

What would a structural engineer's "site visit report skill" look like? You feed it photos and notes from a facade inspection. It structures a report following the engineer's standard format: condition grading, defect categorisation, priority ranking, recommended next steps. The expertise is knowing what to look for, how to categorise what you find, and what language to use so the client and contractor both understand the implications. That judgment is the kind of thing a skill can encode.

What about an architect's "planning application skill"? Give it a site address and a proposed development. It checks local planning policy, flags likely objections, and identifies pre-application requirements.

These aren't product roadmap fantasies. They're text files. Someone with the right domain expertise could build them this afternoon.

A recent paper frames it well:

The skill-based architecture transforms the question from "how do we train a model to perform task X?" to "how do we provide a model with executable procedural knowledge for task X?"

The first question requires machine learning engineers. The second requires people who are good at their jobs and can clearly describe what they do.

That's a much larger set of people. And it means the next wave of useful AI tools won't necessarily come from tech companies building features. It'll come from domain experts in every field, encoding what they know into skills that others can plug in and run.

Emil Kowalski checking your animations. Hargreaves Lansdown's fund methodology running on your portfolio. A structural engineer's judgment applied to your site inspection report.

This quiet little text file is more transferable than any tutorial and more durable than any talk. Not advice you have to interpret and apply, but expertise you plug in and run.

If you would like to find out more about skills and using AI agents, please do not hesitate to get in touch.